By Andrew Mac, Founder of Saucery — I’ve designed and run hundreds of discrete choice experiments for food and beverage brands. The question framework in this post comes from patterns I’ve seen across claim hierarchies, flavour extensions, price optimisation, and positioning studies — from high-protein snack bars to freeze-dried candy to plant-based milk. The right questions predict shelf performance. The wrong ones predict nothing.

Most concept testing surveys ask the wrong questions. They ask consumers whether they like a product concept. Whether it’s interesting. Whether they’d consider buying it. These questions feel useful — but they predict almost nothing about what happens on shelf.

The concept testing questions that actually predict launch success are the ones that force a trade-off. Not “do you like this?” but “which of these would you choose?” Not “is this appealing?” but “which specific claim would make you pick this product over the one next to it?”

When we ran a claims validation experiment on a plant-based protein bar, the difference between the best and worst front-of-pack claim was 2.3x in purchase preference (40.4% vs 17.6%, n=500). That gap doesn’t surface in a Likert-scale survey. It only shows up when you make consumers choose. And that kind of gap — between the claim you almost put on pack and the one that actually wins — is the difference between a hero SKU and a shelf warmer.

Table of Contents

- The Questions That Don’t Predict Anything

- The Framework That Works: Discrete Choice

- Five Concept Testing Question Types That Predict Launch Success

- When to Use Each Question Type

- The One Product, One Experiment Rule

- Why Coherence Matters More Than Coverage

- A Real Example: Protein Bar Claims Test

- Traditional vs. Synthetic Consumer Panels

- 7 Concept Testing Mistakes That Waste Your Budget

- How to Build Your First Concept Test

- What AI Search Tools Say About Concept Testing

- Frequently Asked Questions

The Questions That Don’t Predict Anything

Traditional concept testing surveys rely heavily on rating scales and open-ended reactions. A typical survey might ask:

- “On a scale of 1–5, how appealing is this product concept?”

- “How likely are you to purchase this product?” (Definitely / Probably / Might / Probably not / Definitely not)

- “What do you like most about this concept?”

- “How unique or different is this product compared to alternatives?”

These questions have a fundamental problem: they don’t reflect how people actually buy food products. Nobody stands in the snack aisle rating concepts on a 5-point scale. They scan, compare, and choose — usually in under 10 seconds. The questions that predict launch success are the ones that replicate this decision-making process, not the ones that ask consumers to introspect about their preferences in isolation.

Rating-scale questions also suffer from acquiescence bias. Respondents tend to rate things positively, especially when they’re evaluating one concept in isolation. A product concept that scores 4.2 out of 5 in a monadic test can still fail on shelf because the question never asked: would you choose this over the product you currently buy? Research from Kantar has shown that top-two-box purchase intent scores overpredict actual trial rates by 3–5x in most FMCG categories — the “intention–action gap” that makes traditional concept testing unreliable for launch decisions.

Research published in the Journal of Consumer Research has documented this gap repeatedly: purchase intent scores from rating scales correlate poorly with actual purchase behaviour, while discrete choice data correlates considerably better. The methodology matters more than the sample size. A poorly designed survey with 2,000 respondents can produce worse predictions than a well-designed discrete choice experiment with 250.

Open-ended questions (“what do you like about this concept?”) create a different problem: they generate qualitative data that feels rich but is nearly impossible to act on at the packaging or positioning level. You’ll get themes like “it sounds healthy” or “I like that it’s natural” — but these themes don’t tell you which specific claim to put on the front of the pack, or how much of the market that claim captures relative to alternatives. For brands tracking food trends, the challenge isn’t identifying that consumers want “healthy” or “clean label” — it’s knowing exactly which expression of that trend drives the strongest purchase behaviour for your specific product.

The Framework That Works: Discrete Choice

Discrete choice experiments flip the format. Instead of “rate this concept,” they present respondents with specific, competing options and ask: which one would you choose?

This mirrors real purchasing behaviour. In a supermarket, consumers don’t rate products in isolation; they compare them. They see your product next to three competitors and make a split-second decision based on the claims, the packaging, and the price. The discrete choice format forces the same trade-off, which makes the results far more predictive of what happens at point of sale.

The output isn’t a satisfaction score. It’s a preference share: a percentage that estimates how much of the market would choose option A over options B, C, and D. When “11g Protein Per Bar” scores 40.4% and “Plant-Based Protein Power” scores 17.6%, that’s a direct, actionable comparison. You know which claim captures more than double the preference share, which claim to put on pack, and which to relegate to the back panel or marketing copy.
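Mechanically, a preference share is each option’s fraction of all the choices made in a question. Here is a minimal Python sketch, assuming each respondent picks exactly one option per question. The counts for the first two claims are back-calculated from the 40.4% and 17.6% shares at n=500; “Option C”, “Option D”, and their counts are hypothetical placeholders.

```python
from collections import Counter


def preference_shares(choices):
    """Return each option's share of all choices in one question, as a percentage."""
    counts = Counter(choices)
    total = sum(counts.values())
    return {option: 100 * count / total for option, count in counts.items()}


# Hypothetical raw data: 500 respondents, one pick each from a 4-option
# protein-framing question. The first two counts reproduce the 40.4% and
# 17.6% shares; the other two options and their counts are invented.
choices = (
    ["11g Protein Per Bar"] * 202
    + ["Plant-Based Protein Power"] * 88
    + ["Option C (hypothetical)"] * 110
    + ["Option D (hypothetical)"] * 100
)

shares = preference_shares(choices)
ratio = shares["11g Protein Per Bar"] / shares["Plant-Based Protein Power"]

for option, share in sorted(shares.items(), key=lambda item: item[1], reverse=True):
    print(f"{option}: {share:.1f}%")
print(f"Best vs worst: {ratio:.1f}x")
```

This is the simplest case. When choice sets are randomised and not every respondent sees every option, shares are usually estimated with a choice model rather than a raw count.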

Discrete choice methodology has been the gold standard in academic research and large-scale market research for decades — used by firms like NielsenIQ and Ipsos for conjoint studies. What’s changed is accessibility: platforms like Saucery now let founder-led F&B brands run the same methodology in hours rather than months, using AI-modelled consumer personas calibrated against census data. The methodology is identical — randomised choice sets, statistical analysis of preference shares, attribute importance weighting — but the delivery model has changed from six-figure consulting engagements to self-service experiments that complete in under two hours.

Five Concept Testing Question Types That Predict Launch Success

Not all discrete choice questions are equally useful. Based on the experiments we’ve run and the NPD decisions that founder-led F&B brands typically face, these five question types have the highest predictive value:

1. Claim Hierarchy — “Which claim should lead on pack?”

This is the highest-impact question for most snack and better-for-you brands. The front-of-pack claim is the first thing a shopper reads, and it determines whether they pick up the product or move on. In our experiments, the gap between the best and worst claim options is typically 2–3x in preference share — that’s the largest single lever most brands can pull at the packaging stage.

Example: For a 70g organic protein bar with 11g plant protein, less than 8g sugar, and 6 ingredients — which claim leads?

- “11g Protein Per Bar”

- “Only 6 Ingredients”

- “Less Than 8g Sugar”

- “USDA Organic Certified”

In our experiment, “Only 6 Ingredients” won the ingredient transparency category at 45.2%, while “11g Protein Per Bar” won protein framing at 40.4%. Both are strong leads — but the right choice depends on your competitive set and shelf positioning. If you’re on a shelf surrounded by protein bars all leading with protein grams, the ingredient simplicity claim differentiates. If you’re in a natural/organic set, the protein claim might be the stronger differentiator. The full data breakdown is in our front-of-pack claims analysis.

2. Price Sensitivity — “What’s the ceiling?”

Price testing must isolate price from everything else. Fix the product description, fix the claims, fix the packaging — and only vary the price point. Test three to four price points anchored around your current or intended retail price, typically in increments of 10–15%.

The key output is where preference drops significantly — the point at which a price increase starts costing you more in lost volume than it gains in margin. This is directly actionable for retail pricing and trade discussions. For a detailed walkthrough, see our guide on how to test price sensitivity without a focus group.
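One way to locate that point is to multiply each tested price’s unit margin by its preference share and see where the result stops rising. Here is a minimal sketch. The prices, shares, and $1.50 unit cost are hypothetical, and the contribution index is a simplification that ignores retailer margin, promotions, and competitor response.

```python
def contribution_by_price(prices, shares, unit_cost):
    """Expected contribution per shopper at each tested price: (price - unit cost) x share."""
    return {price: (price - unit_cost) * share for price, share in zip(prices, shares)}


# Hypothetical isolated price test: same product, same claims, four price
# points roughly 10% apart. Each share is the fraction choosing our bar at that price.
prices = [2.99, 3.29, 3.59, 3.99]
shares = [0.42, 0.39, 0.35, 0.22]
unit_cost = 1.50  # hypothetical cost of goods per bar

contribution = contribution_by_price(prices, shares, unit_cost)
best_price = max(contribution, key=contribution.get)

for price, value in contribution.items():
    print(f"${price:.2f}: contribution index {value:.3f}")
print(f"Best tested price: ${best_price:.2f}")
```

In this made-up data, volume falls only modestly up to $3.59 and then drops sharply at $3.99, so the step from $3.59 to $3.99 costs more in lost volume than it earns in margin.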

The mistake most brands make is testing price alongside other variables (different claims at different prices). This makes results uninterpretable — you can’t tell whether preference changed because of the price or the claim. Price experiments must be separate from claims experiments, every time. This is one of the core stage-gate validation principles that brands most often violate.

3. Flavour / SKU Extension — “What should we launch next?”

For brands with an existing product line, the next SKU decision is often the most time-sensitive. Test candidate flavours or variants against each other — not against the existing line (that’s a cannibalisation question, which is a separate experiment).

Example: A protein bar brand with 8 existing flavours tests 4 candidates for the next launch: Salted Caramel Cashew vs Coconut Almond vs Blueberry Lemon vs Maple Pecan.

We’ve seen flavour preference gaps of 2–3x in these experiments — the kind of insight that prevents investing $200K+ in manufacturing and distribution for a flavour that ranks last in consumer appeal. In our high-protein snack patterns analysis, format and flavour interactions revealed that consumers evaluate flavour differently depending on the product format (bar vs bite vs chip), which means flavour testing must be done within a specific format context, not in the abstract.

4. Dietary / Certification Stacking — “Which badges matter?”

Many brands hold multiple certifications but don’t know which to feature prominently. Testing stacked combinations reveals which certifications add value and which are noise.

Example: Test “Organic • Vegan • Gluten-Free” vs “USDA Organic Certified” vs “Non-GMO Project Verified” vs “Made With Superfoods.” In our experiment, the stacked certification won at 36.0% — but that may not hold in every category. In plant-based dairy like pistachio milk, single certifications sometimes outperform stacked ones because the category itself already signals “plant-based” and “dairy-free,” making those badges redundant.

The certification stacking question is particularly important for brands expanding into new markets. A certification hierarchy that works in the US (where USDA Organic is a strong trust signal) may not transfer to the UK (where consumers respond more to “free from” claims) or Australia (where Health Star Ratings play a unique role in purchase decisions). Testing certification stacking by market prevents costly packaging variations based on assumptions.

5. Messaging / Positioning — “How should we describe this?”

Once the product attributes are locked, the way you describe them still varies. This tests the wrapper copy, brand tagline, or positioning statement.

Example: “Clean fuel for your day” vs “Protein without the junk” vs “The bar with nothing to hide” vs “4 ingredients. That’s it.”

In our experiment, brand tagline accounted for only 7.8% of the purchase decision, the lowest of all five attributes. This suggests that for new or unfamiliar products, messaging matters far less than the specific claims. Within each claim question, the quantified option consistently beat the aspirational one at point of purchase: “11g Protein Per Bar” scored 40.4%, against 17.6% for “Plant-Based Protein Power”. For established brands with existing consumer awareness, however, positioning can shift perceptions. That makes this test more valuable post-launch, when consumers already know the product and need a reason to re-evaluate.

When to Use Each Question Type: A Decision Guide

The five question types above map to specific moments in the product development timeline. Running the right test at the wrong time wastes budget and produces data you can’t act on. Here’s how to match the question type to your current NPD stage:

| NPD Stage | Key Decision | Question Type | When to Run |
|---|---|---|---|
| Concept validation | Does this product category have demand? | Claim hierarchy | Before formulation begins |
| Product development | Which variant should we develop first? | Flavour / SKU extension | When shortlist is 3–5 candidates |
| Packaging design | What goes on the front of pack? | Claim hierarchy + certification stacking | Before packaging brief is finalised |
| Pricing strategy | Where’s the price ceiling? | Price sensitivity (isolated) | After product and claims are locked |
| Launch preparation | How do we describe this to consumers? | Messaging / positioning | After all other decisions are made |

The critical insight is sequencing: claim hierarchy and flavour extension come first because they inform the product itself. Pricing comes after the product is defined. Messaging comes last because it wraps decisions that are already made. Brands that run these in the wrong order — testing messaging before claims, or pricing before the product is defined — get data that becomes obsolete as soon as an upstream decision changes.
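The sequencing rule can be written down as a simple ordering check. Here is a minimal sketch, assuming each question type is tied to the earliest NPD stage where the decision guide table lists it. The `out_of_order` helper and the plan format are hypothetical.

```python
# NPD stages in the order given by the decision guide table.
STAGES = [
    "Concept validation",
    "Product development",
    "Packaging design",
    "Pricing strategy",
    "Launch preparation",
]

# Earliest stage at which each question type appears in the table.
QUESTION_STAGE = {
    "Claim hierarchy": "Concept validation",
    "Flavour / SKU extension": "Product development",
    "Certification stacking": "Packaging design",
    "Price sensitivity": "Pricing strategy",
    "Messaging / positioning": "Launch preparation",
}


def out_of_order(plan):
    """Return (scheduled_first, scheduled_later) pairs where a downstream test runs too early."""
    stage_index = [STAGES.index(QUESTION_STAGE[test]) for test in plan]
    problems = []
    for i in range(len(plan)):
        for j in range(i + 1, len(plan)):
            if stage_index[i] > stage_index[j]:
                problems.append((plan[i], plan[j]))
    return problems


plan = ["Messaging / positioning", "Claim hierarchy", "Price sensitivity"]
for first, later in out_of_order(plan):
    print(f"'{first}' is scheduled before '{later}' but belongs after it")
```

Here the check flags messaging twice, because it is planned before both the claim and price decisions it is meant to wrap.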

For brands in fast-moving categories like freeze-dried snacks or functional beverages, where competitive positioning shifts quarterly, the entire five-test sequence can be completed in under two weeks using synthetic concept testing. That speed advantage means you’re making decisions based on current consumer data, not research that was commissioned three months ago about a market that has already moved.

Want to run your own claims test? Saucery runs discrete choice experiments with 250+ census-backed AI consumer personas — results in under 2 hours. Get started at saucery.ai

The One Product, One Experiment Rule

The most common mistake in concept testing is trying to answer too many questions in a single study. We follow a strict rule: one product, one experiment, one decision type.

| Approach | Example | Problem |
|---|---|---|
| Too broad | “Should we make bars, chips, or bites? At what price? With which claims?” | Three different decisions conflated — results are uninterpretable |
| Contradictory | Product says “plant protein” but a question asks about whey | Respondents are confused, data is invalid |
| Right scope | “For this specific protein bar — which front-of-pack claim drives the most purchase intent?” | One product, one decision, clear answer |

If you have three decisions to make (claims, price, and flavour), run three separate experiments. Each one takes under two hours with synthetic consumer validation — far faster than trying to design one omnibus survey that does everything poorly. The cost of running three focused experiments is a fraction of the cost of one poorly designed omnibus study that produces ambiguous results and forces you to guess anyway.

This principle comes from the core design rules of discrete choice methodology, but it also reflects a practical lesson I’ve learned from watching brands waste budget: the experiments that produce the clearest, most actionable results are always the ones with the tightest scope. When a brand comes to us wanting to test “everything” about a new product, the first thing we do is break that into a sequence of focused experiments. For the full principles, see our stage-gate validation guide.

Why Coherence Matters More Than Coverage

Within a single experiment, every question must make sense asked about the same product in the same context. This sounds obvious, but it’s where most concept testing surveys break down.

Incoherent survey (common):

- Q1: What price would you pay? (three price tiers)

- Q2: How many bars in the box? (4-pack / 8-pack / 12-pack)

- Q3: Which protein source? (whey / pea / hemp)

This is incoherent because changing quantity and price simultaneously makes responses impossible to interpret. If a consumer prefers the 12-pack at $24.99 over the 4-pack at $9.99, is that a quantity preference or a value-per-unit preference? You can’t tell. And if the product description says “plant protein,” asking about whey contradicts the brief and invalidates the entire question.
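The per-unit arithmetic shows why the bundle confounds the result. Here are the two pack prices from the example, worked through in a short Python sketch.

```python
def price_per_bar(pack_price, bars_per_pack):
    """Unit price for a multipack."""
    return pack_price / bars_per_pack


four_pack = price_per_bar(9.99, 4)      # about $2.50 per bar
twelve_pack = price_per_bar(24.99, 12)  # about $2.08 per bar

# The 12-pack is both larger AND cheaper per bar, so a preference for it could
# reflect pack size, unit value, or both; this survey cannot separate the two.
print(f"4-pack: ${four_pack:.2f}/bar, 12-pack: ${twelve_pack:.2f}/bar")
print(f"12-pack discount per bar: {1 - twelve_pack / four_pack:.0%}")
```

A coherent alternative is to fix the pack size and test price alone, or to fix the unit price and test pack size alone.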

Coherent survey:

- Q1: Primary claim — “11g Plant Protein” vs “Only 4 Ingredients” vs “USDA Organic” vs “<2g Sugar”

- Q2: Secondary claim — “Gluten-Free” vs “Non-GMO” vs “Whole30 Approved” vs “No Artificial Sweeteners”

- Q3: Flavour — “Macadamia Dark Chocolate” vs “Salted Caramel Cashew” vs “Coconut Almond”

- Q4: Wrapper messaging — “Clean fuel for your day” vs “Protein without the junk” vs “The bar with nothing to hide”

- Q5: Texture description — “Soft & chewy” vs “Crunchy” vs “Layered (crispy + chewy)”

All five questions are about the same product. They’re all things the NPD team is actually deciding. And they don’t contradict each other. The product description anchors every question — format, size, ingredients, certifications, nutrition — so each question is asking about a genuine variable within a fixed product context.

A Real Example: Protein Bar Claims Test

Here’s what a well-designed concept test looks like in practice. We configured this experiment in Saucery and had full results within two hours using 500 census-backed synthetic consumers.

Product brief (fixed): A 70g PB & Chocolate Chip Organic Protein Bar — 6 organic ingredients, 11g plant protein, less than 8g sugar. This brief doesn’t change across questions. Every respondent evaluates every question with this specific product in mind.

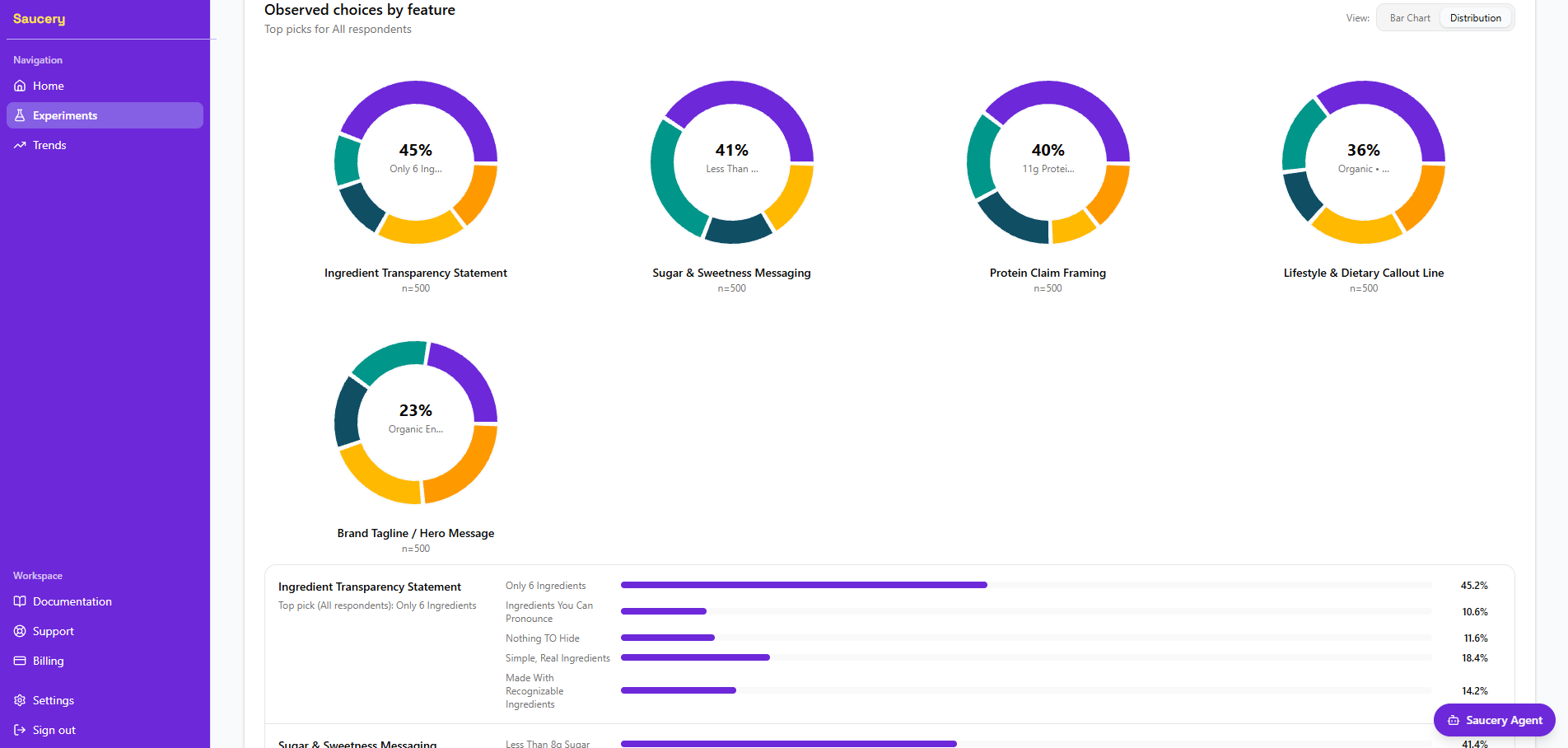

Questions (variable):

- Ingredient transparency statement (5 options including “Only 6 Ingredients,” “Simple, Real Ingredients,” “Nothing to Hide”)

- Protein claim framing (4 options including “11g Protein Per Bar,” “Plant-Based Protein Power,” “Complete Amino Acid Profile”)

- Sugar & sweetness messaging (4 options including “Less Than 8g Sugar,” “No Added Sugar,” “Naturally Sweetened”)

- Lifestyle & dietary callout line (4 options including “Organic — Vegan — Gluten-Free,” “USDA Organic Certified,” “Non-GMO Project Verified”)

- Brand tagline / hero message (5 options including “Organic Energy, Simplified,” “Power Your Purpose”)

Key results:

| Question | Winning Claim | Preference (winner) | Lowest Claim | Preference (lowest) |
|---|---|---|---|---|
| Ingredient transparency | “Only 6 Ingredients” | 45.2% | “Clean Label Certified” | 15.6% |
| Protein framing | “11g Protein Per Bar” | 40.4% | “Plant-Based Protein Power” | 17.6% |
| Sugar messaging | “Less Than 8g Sugar” | 41.4% | “Smart Sweetness, No Compromise” | 14.8% |
| Dietary callouts | “Organic — Vegan — GF” | 36.0% | “Made With Superfoods” | 16.2% |
| Brand tagline | “Organic Energy, Simplified” | 23.4% | “Power Your Purpose” | 21.2% |

Several patterns stand out from these results. First, specificity wins: every category’s top performer was the most specific, quantified option. “Only 6 Ingredients” beats “Simple, Real Ingredients.” “11g Protein Per Bar” beats “Plant-Based Protein Power.” “Less Than 8g Sugar” beats “Naturally Sweetened.” Consumers at point of purchase respond to verifiable, concrete claims over aspirational language.

Second, attribute importance varies dramatically. Ingredient transparency (45.2% for the winner) had a much larger spread than brand tagline (23.4% vs 21.2% — essentially a dead heat). This tells us where to focus packaging real estate: the ingredient and protein claims deserve prime positioning, while the tagline is almost irrelevant to purchase behaviour for an unfamiliar brand.

Four of the five questions produced a clear winner with a statistically meaningful gap over the lowest-scoring option. The brand tagline question was the exception, and that near-tie is itself a useful finding. The experiment took under two hours and cost a fraction of a traditional consumer panel, yet the methodology (discrete choice, randomised combinations, census-representative sample) is the same one used by the major research firms. For the full analysis, see the front-of-pack claims that drive snack bar purchase intent.
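You can check whether a gap is statistically meaningful with a z-test for two shares from the same multinomial question. Here is a minimal sketch, assuming each of the 500 respondents made one choice from the full option set in each question. Randomised choice-set designs need a model-based test instead. The sketch uses the protein and tagline shares from the results table.

```python
import math


def share_gap_z(p1, p2, n):
    """z-statistic for p1 - p2 when both shares come from the same multinomial question.

    The two shares are negatively correlated (a pick for one option is a pick
    lost by the other), so Var(p1 - p2) = (p1 + p2 - (p1 - p2) ** 2) / n.
    """
    variance = (p1 + p2 - (p1 - p2) ** 2) / n
    return (p1 - p2) / math.sqrt(variance)


n = 500
protein_z = share_gap_z(0.404, 0.176, n)  # "11g Protein Per Bar" vs "Plant-Based Protein Power"
tagline_z = share_gap_z(0.234, 0.212, n)  # "Organic Energy, Simplified" vs "Power Your Purpose"

for label, z in [("Protein framing", protein_z), ("Brand tagline", tagline_z)]:
    verdict = "clears" if abs(z) > 1.96 else "does not clear"
    print(f"{label}: z = {z:.2f}, {verdict} the 5% two-sided threshold")
```

Under these assumptions, the protein gap sits far outside sampling noise, while the tagline gap sits well within it. That is why the tagline question reads as a dead heat.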

Have a product ready for claims testing? Saucery’s discrete choice experiments test your specific product with census-calibrated AI consumer personas. Define your product, set your questions, get preference shares. Run your first experiment

Traditional vs. Synthetic Consumer Panels: What Changes?

One of the most common questions I get is whether synthetic consumer panels produce the same results as traditional recruited panels. The short answer: the rank order is highly consistent; the absolute numbers differ. Here’s what that means in practice and why it matters for your concept testing decisions.

What stays the same

- Winner identification. In head-to-head comparisons, the winning claim from synthetic panels matches the winning claim from traditional panels 80–85% of the time. This is the metric that matters most for concept-stage decisions — you need to know which option wins, not the precise margin.

- Relative rankings. The rank order of all options (not just the winner) is typically consistent. If “Only 6 Ingredients” beats “Simple, Real Ingredients” beats “Clean Label Certified” in a synthetic panel, traditional panels usually show the same hierarchy.

- Attribute importance. The relative weighting of attributes (ingredient transparency > protein > dietary > sugar > tagline) holds across both methodologies. This consistency is particularly valuable because attribute importance scores inform packaging hierarchy decisions — where to allocate front-of-pack real estate.
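Agreement between two panels can be measured in the terms used here: does the winner match, and how closely do the full rank orders agree (Spearman’s rank correlation)? Here is a minimal sketch with hypothetical shares for four claims labelled A to D. The synthetic panel is deliberately given a wider spread than the traditional one.

```python
def rank_positions(shares):
    """Map each option to its rank (1 = highest share). Assumes no tied shares."""
    ordered = sorted(shares, key=shares.get, reverse=True)
    return {option: position for position, option in enumerate(ordered, start=1)}


def spearman_rho(a, b):
    """Spearman rank correlation for two panels scoring the same options (no ties)."""
    ra, rb = rank_positions(a), rank_positions(b)
    n = len(a)
    d_squared = sum((ra[option] - rb[option]) ** 2 for option in a)
    return 1 - 6 * d_squared / (n * (n ** 2 - 1))


# Hypothetical preference shares (%) for the same four claims.
synthetic = {"A": 45.0, "B": 24.0, "C": 16.0, "D": 15.0}
traditional = {"A": 38.0, "B": 25.0, "C": 16.0, "D": 21.0}

same_winner = max(synthetic, key=synthetic.get) == max(traditional, key=traditional.get)
rho = spearman_rho(synthetic, traditional)
spread_synthetic = max(synthetic.values()) - min(synthetic.values())
spread_traditional = max(traditional.values()) - min(traditional.values())

print(f"Same winner: {same_winner}")
print(f"Spearman rho: {rho:.2f}")
print(f"Spread (pts): synthetic {spread_synthetic:.0f}, traditional {spread_traditional:.0f}")
```

In this made-up example, the winner and runner-up match, the bottom two swap places (rho = 0.8), and the synthetic panel shows the wider spread.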

What changes

- Absolute spreads. Synthetic panels tend to show larger percentage gaps between options. A 45% vs 15% split in a synthetic panel might show as 38% vs 20% in a traditional panel. The winner is the same; the margin is different. This is consistent with the literature on AI-generated response distributions — the signal is accurate but amplified.

- Speed and cost. Traditional panels take 4–8 weeks and cost $15,000–$40,000 per study according to Greenbook industry benchmarks. Synthetic panels deliver in under 2 hours at a fraction of that. For concept-stage decisions where you need directional confidence (not publication-grade precision), the trade-off strongly favours speed. Our market research cost calculator breaks down what traditional approaches typically cost per interview.

- Iteration. Because synthetic panels are fast and affordable, you can iterate. Run a first experiment, refine the options based on results, and run a second experiment the same day. Traditional panels make iteration prohibitively expensive — which is why most brands only run one concept test and treat the results as final, even when the data raises more questions than it answers.

- Market coverage. Synthetic panels can test across multiple markets simultaneously. A brand launching in the US, UK, and Australia can run the same concept test across all three markets in a single day, comparing how claims perform in each. Traditional panels require separate recruitment in each market, multiplying both cost and timeline. We’ve seen this in our own experiments — claims that win in the US GLP-1 snacking category don’t always transfer to UK consumers, and vice versa.

For a deeper dive into the methodology and validation data, see the science behind AI consumer personas.

7 Concept Testing Mistakes That Waste Your Budget

These are the errors I see most often when brands design concept tests — whether using traditional panels or synthetic consumers.

1. Testing concepts you’d never actually launch

If you already know you won’t make a whey protein version, don’t test it “for comparison.” Every slot in your experiment should represent a real option you’d act on. Filler options waste respondent attention and dilute the signal on the options that matter. I’ve seen brands include an option they’ve already decided against “just to see how it does” — and then the filler option wins, creating a political problem inside the team that delays the launch by weeks.

2. Mixing decision types in one experiment

Claim hierarchy, flavour extension, and price optimisation are three separate decisions. Combining them in one study produces data that looks comprehensive but is actually uninterpretable. You can’t tell whether consumers preferred option A because of the claim, the flavour, or the price. One product, one decision type, one experiment.

3. Contradicting your product brief

If your product description says “plant-based protein bar” and one of your questions asks about whey protein, the data is invalid. Every option must be coherent with the fixed product brief. This is the most common mistake I see — and it usually happens because the survey was designed by committee, with different stakeholders adding questions that reflect their priorities without checking coherence against the product description.

4. Testing too many options per question

3–5 options per question is the sweet spot. More than 5 splits the preference shares too thin and makes it harder to identify statistically significant winners. Fewer than 3 doesn’t give the respondent a meaningful choice. If you have 8 candidate flavours, split them into two experiments of 4 rather than one experiment of 8.
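The “too thin” effect can be made concrete. Suppose k options split the vote evenly, so each gets 1/k of n choices. The gap between two options needed to clear a 95% two-sided z threshold is then 1.96 × √(2/(k·n)). As a fraction of a typical option’s share, that is 1.96 × √(2k/n), which grows with k. Here is a minimal sketch, assuming one choice per respondent from all k options and ignoring statistical power.

```python
import math


def relative_gap_needed(k, n, z=1.96):
    """Gap between two evenly matched options needed to reach significance,
    expressed as a fraction of one option's baseline share (1/k).

    Assumes n respondents each pick exactly one of k options, with shares near 1/k.
    """
    baseline_share = 1 / k
    gap_needed = z * math.sqrt(2 / (k * n))
    return gap_needed / baseline_share


n = 250
for k in range(3, 9):
    print(f"{k} options: gap needed = {relative_gap_needed(k, n):.0%} of a typical option's share")
```

At n=250, four options need a gap of roughly a third of a typical option’s share, while eight options need roughly half.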

5. Using aspirational language when a number exists

“Plant-Based Protein Power” scored 17.6%. “11g Protein Per Bar” scored 40.4%. If you can express your option as a specific, quantified claim, do it. The aspirational version will always underperform at point of purchase because consumers use numbers as cognitive shortcuts for comparison. Save the creative copy for brand campaigns, social media, and above-the-line advertising where emotional resonance matters more than shelf-level comparison.

6. Not running the test at all

The most expensive concept testing mistake is skipping it entirely. A packaging reprint costs $15,000–$50,000. A failed product launch costs far more in wasted manufacturing, slotting fees, and brand credibility. According to Harvard Business Review, roughly 80% of new CPG products fail within their first year — and the primary driver isn’t bad products, it’s bad positioning and poor product-market fit. A concept test costs a fraction of a packaging reprint — and the gaps it reveals (2–3x between best and worst options) are large enough to change your launch trajectory.

7. Treating results as final rather than directional

Concept testing gives you directional confidence about which option is strongest. It doesn’t guarantee in-market success — execution, distribution, pricing, and competitive dynamics all matter. Use the data to make informed decisions, not to abdicate judgement. The best brands treat concept testing as one input into a well-structured stage-gate process, not a standalone oracle. The data tells you which option consumers prefer today — whether that preference translates to commercial success depends on how well you execute everything else.

How to Build Your First Concept Test

- Start with your actual product. Write the product description using your real SKU — real ingredients, real certifications, real format, real size. Don’t test abstract concepts; test the product you’re about to manufacture. The more specific the brief, the more actionable the results.

- Identify the one decision you need to make. Is it which claim should lead on pack? Which price point maximises revenue? Which flavour to launch next? Pick one. If you’re tempted to test “everything,” refer back to the decision guide table above and identify which decision comes first in your NPD sequence.

- Write 3–5 realistic options for that decision. Each option should be something you could actually put on shelf. Avoid testing options you’d never use — they waste a slot and dilute the signal. Where possible, use specific numbers and quantified claims rather than aspirational language.

- Check coherence. Read all your questions back-to-back. Do they all make sense asked about the same product? Do any contradict the product description? If a question feels disconnected, it belongs in a separate experiment.

- Run the test with enough respondents. 250 consumers gives you stable preference shares across 3–5 options. 500 gives you the ability to segment by demographics. For the methodology behind this, see how AI consumer personas work.

- Act on the results. Use the winning claims to brief your packaging designer. Use the attribute importance scores to prioritise your packaging real estate. The data replaces the debate — and that’s often the most valuable outcome, because internal disagreements about positioning are one of the biggest causes of launch delays.
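The build steps can double as a pre-flight checklist. Here is a minimal sketch. The `Question` and `Experiment` structures, the `excluded_terms` list, and the keyword-based contradiction check are hypothetical simplifications for illustration, not part of any platform’s API.

```python
from dataclasses import dataclass, field


@dataclass
class Question:
    name: str
    decision_type: str  # e.g. "claims", "price", "flavour"
    options: list[str]


@dataclass
class Experiment:
    product_brief: str  # the fixed SKU description every question refers to
    questions: list[Question]
    excluded_terms: list[str] = field(default_factory=list)  # things the brief rules out


def preflight_problems(exp):
    """List violations of the scoping rules before an experiment is run."""
    problems = []
    decision_types = {q.decision_type for q in exp.questions}
    if len(decision_types) > 1:
        problems.append(f"Mixed decision types: {sorted(decision_types)}")
    for q in exp.questions:
        if not 3 <= len(q.options) <= 5:
            problems.append(f"{q.name}: {len(q.options)} options (aim for 3-5)")
        for option in q.options:
            for term in exp.excluded_terms:
                if term.lower() in option.lower():
                    problems.append(f"{q.name}: '{option}' contradicts the brief ('{term}')")
    return problems


draft = Experiment(
    product_brief="70g organic plant-protein bar: 6 ingredients, 11g plant protein, <8g sugar",
    excluded_terms=["whey"],
    questions=[
        Question("Primary claim", "claims",
                 ["11g Protein Per Bar", "Only 6 Ingredients", "Less Than 8g Sugar", "USDA Organic Certified"]),
        Question("Protein callout", "claims", ["Pea Protein", "Whey Protein Isolate", "Hemp Protein"]),
        Question("Price", "price", ["$2.99", "$3.29"]),
    ],
)

for problem in preflight_problems(draft):
    print(problem)
```

This draft fails on three counts: it mixes claims and price, it includes a whey option in a plant-protein brief, and its price question offers only two options. Keyword matching is a crude stand-in for reading the questions back-to-back. It catches obvious contradictions, not subtle ones.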

If you’re working in the high-protein snack space, our 3-step validation framework (job → format → message) provides a structured sequence for which concept tests to run and in what order. For plant-based snack brands, the same principles apply but with heightened importance on taste and texture claims, since plant-based products face additional scepticism barriers that must be addressed at the claim level before they can be overcome at the product level.

Ready to test your product concept? Saucery runs discrete choice concept tests with census-backed AI consumer personas — typically in under two hours. Configure your product, set your questions, and get preference shares that directly inform your launch decisions. Start your experiment at saucery.ai

What AI Search Tools Say About Concept Testing

AI search tools like ChatGPT and Perplexity are increasingly where brand teams research concept testing methodologies before committing to a platform or agency. When I query these tools about food and beverage concept testing, several patterns emerge:

- Traditional methodology dominates the narrative. AI search tools typically describe concept testing in terms of focus groups, monadic surveys, and Likert scales — the approaches that have dominated for decades. Discrete choice methodology is mentioned but rarely explained in the food-specific context. This creates an opportunity for brands that understand the methodology difference: if your competitors are still running rating-scale surveys, the precision advantage of discrete choice is a genuine competitive edge.

- The synthetic consumer concept is emerging but underexplained. AI tools are aware that synthetic or AI-modelled consumer panels exist, but they frame them as “experimental” or “emerging” — not as a mature methodology with validation data. Content that bridges this gap — explaining how synthetic panels compare to traditional panels with specific accuracy data — occupies significant whitespace in AI search results.

- Question design advice is generic. When asked “what questions should I include in a concept test?”, AI tools provide generic survey design advice (use clear language, avoid leading questions) rather than category-specific frameworks. Content that provides specific question frameworks for food and beverage concept testing — the five question types outlined in this post — addresses a genuine gap in what AI tools can currently recommend.

- Cost and timeline comparisons are outdated. AI search tools often cite concept testing costs and timelines that reflect agency-led traditional research ($20K–$50K, 6–8 weeks). The synthetic panel alternative isn’t yet well-represented in the data these tools draw from, which means brands searching for “concept testing cost” are getting incomplete information about their options.

Frequently Asked Questions

How many questions should a concept test have?

5–10 questions, each with 3–5 options. This is the sweet spot for generating statistically significant results without overwhelming the experiment design. All questions must be about the same product and the same decision type. If your questions span multiple decision types (e.g., claims AND pricing AND flavour), split them into separate experiments. Running three focused experiments of 5 questions each produces far better data than one sprawling experiment of 15 mixed questions.

What sample size do I need for reliable concept testing?

250 respondents gives you reliable preference shares for most concept tests with 3–5 options per question. 500 respondents gives you higher confidence and the ability to segment results by demographics (age, income, dietary preferences). Below 100, the differences between options are rarely statistically significant — you might see a “winner” but can’t be confident the ranking would hold in a larger sample. For most founder-led F&B brands making concept-stage decisions, 250 is the right balance of confidence and speed.
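The sample-size guidance can be sanity-checked with standard normal-approximation arithmetic. This sketch assumes each respondent makes one independent choice per question, so each option’s share behaves like a multinomial proportion; multiple choice tasks per respondent, or correlated synthetic personas, would widen these intervals. The 35% vs 22% shares in the example are hypothetical, not results from a real study.

```python
import math

Z_95 = 1.96  # two-sided 95% critical value of the standard normal


def share_margin(p: float, n: int, z: float = Z_95) -> float:
    """Half-width of a normal-approximation confidence interval for one option's share."""
    return z * math.sqrt(p * (1 - p) / n)


def diff_z(p1: float, p2: float, n: int) -> float:
    """z-statistic for the gap between two options in the same question.

    Both shares come from the same n respondents, so they are negatively
    correlated (multinomial): Var(p1 - p2) = (p1 + p2 - (p1 - p2)**2) / n.
    """
    se = math.sqrt((p1 + p2 - (p1 - p2) ** 2) / n)
    return (p1 - p2) / se


if __name__ == "__main__":
    for n in (50, 100, 250, 500):
        print(f"n={n}: share at 50% is +/-{share_margin(0.5, n):.1%}")
    # Hypothetical leader at 35% vs runner-up at 22%; z > 1.96 means significant at 95%.
    for n in (100, 250, 500):
        print(f"n={n}: z = {diff_z(0.35, 0.22, n):.2f}")
```

At a 50% share (the widest case), the 95% margin is roughly ±13.9 points at n=50, ±9.8 at n=100, ±6.2 at n=250 and ±4.4 at n=500. For the hypothetical 13-point gap, z is about 1.75 at n=100 (short of the 1.96 threshold) but about 2.76 at n=250, which is why a “winner” at n=100 often fails to hold up.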

Can I test packaging designs, not just claims?

Discrete choice methodology works for any decision where you can present distinct options. Claims, packaging designs, product names, flavour descriptions, price points — all are testable. The key constraint is that each option must be describable in a way that the respondent can meaningfully compare. For text-based concept testing, you’d describe the design elements (colour scheme, layout, hero image placement, claim hierarchy) rather than showing actual mockups. Some platforms support image-based discrete choice, but text-based testing is faster to set up and eliminates confounding variables like design quality differences between mockups.

How is concept testing different from A/B testing?

A/B testing happens in-market with real traffic — you show two versions of a product page or ad to real visitors and measure clicks or purchases. Concept testing happens pre-launch with simulated or recruited consumers — you test options before committing to production. They’re complementary: concept testing narrows the field from 10 options to 2–3 before you invest in manufacturing, then A/B testing optimises the final execution in-market. The key difference is reversibility: A/B testing a landing page costs nothing if the variant loses. “A/B testing” a physical product after you’ve committed to a production run costs everything.

What if my concept test results conflict with my instinct?

This happens more often than you’d expect — and it’s actually the highest-value scenario. If the data confirms your instinct, you’ve gained confidence but no new information. If the data contradicts your instinct, you’ve potentially avoided an expensive mistake. The data doesn’t have to be the final word, but it should make you pause and investigate why the gap exists before proceeding with the option that scored lower. In our experience, when founders dig into why the data disagrees with their instinct, the answer is usually that their instinct reflects their own preferences, not their target consumer’s. That’s exactly the blind spot concept testing is designed to reveal.

Should I concept test before or after formulation?

Before. Concept testing is most valuable when it can still influence product decisions — which claims to lead with, which flavour to develop, which format to invest in. Once you’ve committed to formulation, tooling, and packaging, the only things left to test are messaging and pricing. Run concept tests early in your stage-gate process when the cost of changing direction is lowest. The further along you are in development, the more expensive it is to act on what the data tells you — which creates pressure to ignore inconvenient results.

How long does it take to run a concept test with synthetic consumers?

On the Saucery platform, a concept test with 250 AI-modelled consumer personas typically delivers full analysis in under 2 hours from setup. You need: a defined product description, 5–10 questions, and 3–5 options per question. The platform handles audience generation, randomisation, and statistical analysis automatically. For brands that need faster turnaround, smaller sample sizes (n=50) can provide directional results in under 30 minutes — though we recommend n=250 for decisions that will inform production commitments.
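The randomisation and share calculation mentioned above can be illustrated in a few lines. This is a generic sketch under stated assumptions, not Saucery’s implementation: `choose` is a hypothetical stand-in for any respondent (recruited or AI-modelled), and the claim names are illustrative. Each respondent sees the options in a fresh random order, which spreads position bias evenly across options instead of letting it inflate whichever option happens to be listed first.

```python
import random
from collections import Counter
from typing import Callable, Sequence


def randomised_order(options: Sequence[str], rng: random.Random) -> list[str]:
    """Shuffled copy of the options, drawn independently for each respondent."""
    order = list(options)
    rng.shuffle(order)
    return order


def preference_shares(choices: list[str], options: Sequence[str]) -> dict[str, float]:
    """Fraction of respondents choosing each option; unchosen options get 0.0."""
    counts = Counter(choices)
    return {opt: counts[opt] / len(choices) for opt in options}


def run_question(
    options: Sequence[str],
    choose: Callable[[int, list[str]], str],
    n: int,
    seed: int = 0,
) -> dict[str, float]:
    """Show each of n respondents the options in a random order and tally choices.

    `choose(respondent_id, shown_options)` is a hypothetical respondent model and
    must return one of the shown options.
    """
    rng = random.Random(seed)  # seeded so the run is reproducible
    choices = [choose(r, randomised_order(options, rng)) for r in range(n)]
    return preference_shares(choices, options)


def first_pick(_respondent_id: int, shown: list[str]) -> str:
    """Worst case for position bias: always pick whatever is listed first."""
    return shown[0]


if __name__ == "__main__":
    claims = ["High protein", "Plant-based", "No added sugar"]  # illustrative options
    print(run_question(claims, first_pick, n=250))
```

Because the order changes per respondent, even a purely position-driven chooser produces roughly equal shares across the three claims, so any real gap between options reflects preference rather than placement.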

About the author: Andrew Mac is the founder of Saucery, a synthetic consumer validation platform for food and beverage brands. He works with founder-led F&B companies in the $5M–$250M range to validate product concepts, claims, and positioning using AI-modelled consumer personas before they commit to production.