Most concept testing surveys ask the wrong questions. They ask consumers whether they like a product concept. Whether it’s interesting. Whether they’d consider buying it. These questions feel useful — but they predict almost nothing about what happens on shelf.

The concept testing questions that actually predict launch success are the ones that force a trade-off. Not “do you like this?” but “which of these would you choose?” Not “is this appealing?” but “which specific claim would make you pick this product over the one next to it?”

When we ran a claims validation experiment on a plant-based protein bar, the difference between the best and worst front-of-pack claim was 2.3x in purchase preference (40.4% vs 17.6%, n=500). That gap doesn’t surface in a Likert-scale survey. It only shows up when you make consumers choose.

Table of Contents

- The Questions That Don’t Predict Anything

- The Framework That Works: Discrete Choice

- Five Concept Testing Question Types That Predict Launch Success

- The One Product, One Experiment Rule

- Why Coherence Matters More Than Coverage

- A Real Example: Protein Bar Claims Test

- How to Build Your First Concept Test

The Questions That Don’t Predict Anything

Traditional concept testing surveys rely heavily on rating scales and open-ended reactions. A typical survey might ask:

- “On a scale of 1-5, how appealing is this product concept?”

- “How likely are you to purchase this product?” (Definitely / Probably / Might / Probably not / Definitely not)

- “What do you like most about this concept?”

- “How unique or different is this product compared to alternatives?”

These questions have a fundamental problem: they don’t reflect how people actually buy food products. Nobody stands in the snack aisle rating concepts on a 5-point scale. They scan, compare, and choose — usually in under 10 seconds.

Rating-scale questions also suffer from acquiescence bias. Respondents tend to rate things positively, especially when they’re evaluating one concept in isolation. A product concept that scores 4.2 out of 5 in a monadic test can still fail on shelf because the question never asked: would you choose this over the product you currently buy?

The Framework That Works: Discrete Choice

Discrete choice experiments flip the format. Instead of “rate this concept,” they present respondents with specific, competing options and ask: which one would you choose?

This mirrors real purchasing behaviour. In a supermarket, consumers don’t rate products in isolation — they compare them. The discrete choice format forces the same trade-off, which means the results are directly predictive of what happens at point of sale.

The output isn’t a satisfaction score. It’s a preference share: the percentage of respondents who chose option A over options B, C, and D. When “11g Protein Per Bar” scores 40.4% and “Plant-Based Protein Power” scores 17.6%, that’s a direct, actionable comparison.
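As a rough illustration, a preference share is just a count of choices divided by the total. The sketch below assumes each of the 500 respondents made one pick in the protein framing question, so the counts (202 and 88) are back-calculated from the published percentages rather than taken from raw data. The `preference_shares` helper and the pooled "(other options)" bucket are hypothetical.

```python
from collections import Counter

def preference_shares(choices):
    """Return each option's share of all choices, as a fraction of 1."""
    counts = Counter(choices)
    total = sum(counts.values())
    return {option: count / total for option, count in counts.items()}

# Assumption: counts are back-calculated from the published shares
# (40.4% and 17.6% of 500 respondents are 202 and 88 picks). The other
# 210 picks went to options not broken out here, so they are pooled.
choices = (
    ["11g Protein Per Bar"] * 202
    + ["Plant-Based Protein Power"] * 88
    + ["(other options)"] * 210
)

shares = preference_shares(choices)
best = shares["11g Protein Per Bar"]
worst = shares["Plant-Based Protein Power"]
ratio = best / worst
print(f"{best:.1%} vs {worst:.1%}, ratio {ratio:.1f}x")
```

The ratio between the two shares is where the 2.3x figure for the protein claim comes from.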

Five Concept Testing Question Types That Predict Launch Success

Not all discrete choice questions are equally useful. Based on the experiments we’ve run and the new product development (NPD) decisions that the brands we work with face, these five question types have the highest predictive value:

1. Claim Hierarchy — “Which claim should lead on pack?”

This is the highest-impact question for most snack and better-for-you brands. The front-of-pack claim is the first thing a shopper reads, and it determines whether they pick up the product or move on.

Example: For a 70g organic protein bar with 11g plant protein, less than 8g sugar, and 6 ingredients — which claim leads?

- “11g Protein Per Bar”

- “Only 6 Ingredients”

- “Less Than 8g Sugar”

- “USDA Organic Certified”

Our experiment tested these claims within their own categories rather than head-to-head. “Only 6 Ingredients” won ingredient transparency at 45.2%, and “11g Protein Per Bar” won protein framing at 40.4%. Both are strong leads, but the right choice depends on your competitive set and shelf positioning.

2. Price Sensitivity — “What’s the ceiling?”

Price testing must isolate price from everything else. Fix the product description, fix the claims, fix the packaging — and only vary the price point. Test three to four price points anchored around your current or intended retail price, typically in increments of 10–15%.

The key output is where preference drops significantly — the point at which a price increase starts costing you more in lost volume than it gains in margin. This is directly actionable for retail pricing and trade discussions.

The mistake most brands make is testing price alongside other variables (different claims at different prices). This makes results uninterpretable — you can’t tell whether preference changed because of the price or the claim.
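To make the "ceiling" idea concrete, here is a minimal sketch that builds a price ladder around an anchor price and looks for the point where price multiplied by preference share stops rising. The anchor price and the shares are invented purely for illustration, and using price times share as a revenue proxy is a simplification (it ignores cost and margin). Both function names are hypothetical.

```python
def price_ladder(anchor, step=0.10, points=4):
    """Price points spaced `step` apart, starting one step below the anchor."""
    return [round(anchor * (1 + step * i), 2) for i in range(-1, points - 1)]

def revenue_index(prices, shares):
    """Price times preference share: a rough proxy for revenue per shopper."""
    return [round(p * s, 3) for p, s in zip(prices, shares)]

# Hypothetical anchor price and preference shares, not real results.
prices = price_ladder(2.50, step=0.10, points=4)
shares = [0.30, 0.29, 0.26, 0.15]

index = revenue_index(prices, shares)
best = max(range(len(prices)), key=lambda i: index[i])
print("prices:", prices)
print("revenue index:", index)
print("ceiling:", prices[best])
```

With these made-up numbers the index peaks at the anchor price and falls after it, which is the pattern a price test is looking for. Because only the price varies, any drop in share can be attributed to price alone.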

3. Flavour / SKU Extension — “What should we launch next?”

For brands with an existing product line, the next SKU decision is often the most time-sensitive. Test candidate flavours or variants against each other — not against the existing line (that’s a cannibalisation question, which is a separate experiment).

Example: A protein bar brand with 8 existing flavours tests 4 candidates for the next launch: Salted Caramel Cashew vs Coconut Almond vs Blueberry Lemon vs Maple Pecan.

4. Dietary / Certification Stacking — “Which badges matter?”

Many brands hold multiple certifications but don’t know which to feature prominently. Testing stacked combinations reveals which certifications add value and which are noise.

Example: Test “Organic • Vegan • Gluten-Free” vs “USDA Organic Certified” vs “Non-GMO Project Verified” vs “Made With Superfoods.” In our experiment, the stacked certification won at 36.0% — but that may not hold in every category.

5. Messaging / Positioning — “How should we describe this?”

Once the product attributes are locked, the way you describe them still varies. This tests the wrapper copy, brand tagline, or positioning statement.

Example: “Clean fuel for your day” vs “Protein without the junk” vs “The bar with nothing to hide” vs “4 ingredients. That’s it.”

In our experiment, brand tagline accounted for only 7.8% of the purchase decision — the lowest of all five attributes. This suggests that for new or unfamiliar products, messaging matters far less than the specific claims. But for established brands, positioning can shift existing perceptions, making this test more valuable post-launch.

The One Product, One Experiment Rule

The most common mistake in concept testing is trying to answer too many questions in a single study. We follow a strict rule: one product, one experiment, one decision type.

| Approach | Example | Problem |
|---|---|---|
| Too broad | “Should we make bars, chips, or bites? At what price? With which claims?” | Three different decisions conflated; results are uninterpretable |
| Contradictory | Product says “plant protein” but a question asks about whey | Respondents are confused, data is invalid |
| Right scope | “For this specific protein bar, which front-of-pack claim drives the most purchase intent?” | One product, one decision, clear answer |

If you have three decisions to make (claims, price, and flavour), run three separate experiments. Each one takes under two hours with synthetic consumer validation — far faster than trying to design one omnibus survey that does everything poorly.

Why Coherence Matters More Than Coverage

Within a single experiment, every question must make sense asked about the same product in the same context. This sounds obvious, but it’s where most concept testing surveys break down.

Incoherent survey (common):

- Q1: What price would you pay? (three price tiers)

- Q2: How many bars in the box? (4-pack / 8-pack / 12-pack)

- Q3: Which protein source? (whey / pea / hemp)

This is incoherent because changing quantity and price simultaneously makes responses impossible to interpret. And if the product description says “plant protein,” asking about whey contradicts the brief.

Coherent survey:

- Q1: Primary claim — “11g Plant Protein” vs “Only 4 Ingredients” vs “USDA Organic” vs “<2g Sugar”

- Q2: Secondary claim — “Gluten-Free” vs “Non-GMO” vs “Whole30 Approved” vs “No Artificial Sweeteners”

- Q3: Flavour — “Macadamia Dark Chocolate” vs “Salted Caramel Cashew” vs “Coconut Almond”

- Q4: Wrapper messaging — “Clean fuel for your day” vs “Protein without the junk” vs “The bar with nothing to hide”

- Q5: Texture description — “Soft & chewy” vs “Crunchy” vs “Layered (crispy + chewy)”

All five questions are about the same product. They’re all things the NPD team is actually deciding. And they don’t contradict each other.
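Part of this coherence check can be automated before a survey goes out. The sketch below is one possible approach, not a description of any particular tool: it flags options that mention a term the product brief rules out, and flags attribute pairs that should not vary together. The class names, the `excluded_terms` field, the `CONFOUNDED_PAIRS` list, and the price tier labels are all hypothetical.

```python
from dataclasses import dataclass, field

@dataclass
class Question:
    varies: str    # the attribute this question changes, e.g. "price"
    options: list

@dataclass
class Experiment:
    brief: str
    excluded_terms: list                      # words the brief rules out
    questions: list = field(default_factory=list)

# Assumption: attribute pairs that should not vary in the same experiment.
CONFOUNDED_PAIRS = [("price", "pack size")]

def coherence_issues(exp):
    """Return readable warnings; an empty list means nothing was flagged."""
    issues = []
    for q in exp.questions:
        for option in q.options:
            for term in exp.excluded_terms:
                if term.lower() in option.lower():
                    issues.append(f"{q.varies}: '{option}' contradicts the brief")
    varied = {q.varies for q in exp.questions}
    for a, b in CONFOUNDED_PAIRS:
        if a in varied and b in varied:
            issues.append(f"'{a}' and '{b}' vary in the same experiment")
    return issues

# The incoherent survey from this section, with placeholder price tiers.
incoherent = Experiment(
    brief="Organic plant protein bar",
    excluded_terms=["whey"],
    questions=[
        Question("price", ["tier 1", "tier 2", "tier 3"]),
        Question("pack size", ["4-pack", "8-pack", "12-pack"]),
        Question("protein source", ["whey", "pea", "hemp"]),
    ],
)
issues = coherence_issues(incoherent)
for issue in issues:
    print(issue)
```

A simple string match like this only catches obvious contradictions. It does not replace reading the questions back-to-back.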

A Real Example: Protein Bar Claims Test

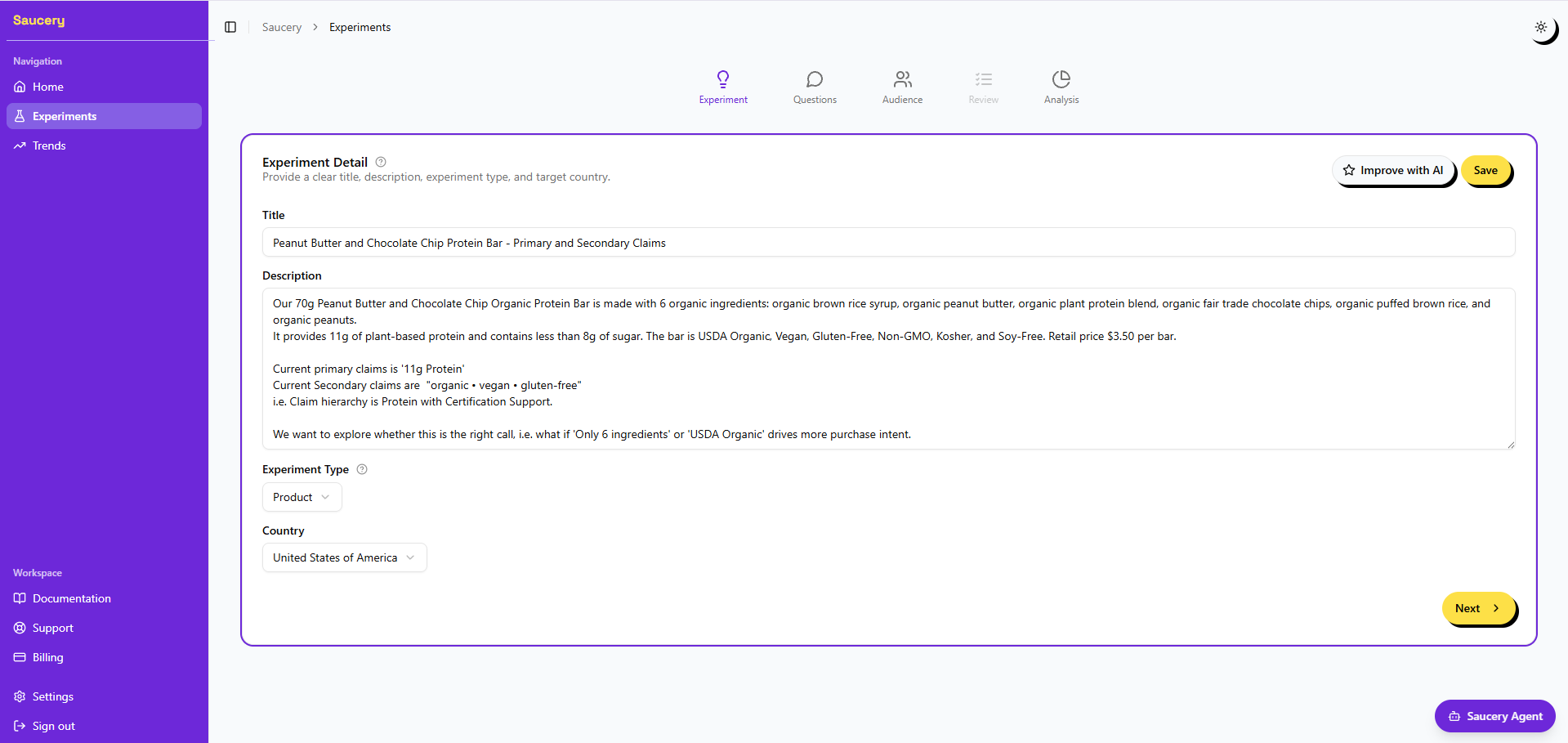

Here’s what a well-designed concept test looks like in practice. We configured this experiment in Saucery and had full results within two hours using 500 census-backed synthetic consumers.

Product brief (fixed): A 70g PB & Chocolate Chip Organic Protein Bar — 6 organic ingredients, 11g plant protein, less than 8g sugar.

Questions (variable):

- Ingredient transparency statement (5 options including “Only 6 Ingredients,” “Simple, Real Ingredients,” “Nothing to Hide”)

- Protein claim framing (4 options including “11g Protein Per Bar,” “Plant-Based Protein Power,” “Complete Amino Acid Profile”)

- Sugar & sweetness messaging (4 options including “Less Than 8g Sugar,” “No Added Sugar,” “Naturally Sweetened”)

- Lifestyle & dietary callout line (4 options including “Organic • Vegan • Gluten-Free,” “USDA Organic Certified,” “Non-GMO Project Verified”)

- Brand tagline / hero message (5 options including “Organic Energy, Simplified,” “Power Your Purpose”)
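For a sense of scale, the option counts above imply a large number of possible packs, which is why combinations are typically randomised rather than shown exhaustively. The sketch below only uses the option counts from the list. Levels are represented by index because the full option texts are not all listed, and the three-profiles-per-task setting is an illustrative assumption, not the configuration used in the experiment.

```python
import math
import random

# Option counts per question, taken from the list above.
levels = {
    "ingredient_transparency": 5,
    "protein_claim": 4,
    "sugar_messaging": 4,
    "dietary_callout": 4,
    "brand_tagline": 5,
}

# Every possible combination of one option per question.
full_factorial = math.prod(levels.values())

def random_profile(rng):
    """Pick one level index per attribute, uniformly at random."""
    return {name: rng.randrange(n) for name, n in levels.items()}

rng = random.Random(0)  # fixed seed so the sketch is repeatable
# Assumption: three competing profiles per choice task.
task = [random_profile(rng) for _ in range(3)]

print("possible combinations:", full_factorial)
for profile in task:
    print(profile)
```

Production designs usually add balance constraints so each option appears about equally often. Uniform random sampling is the simplest stand-in.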

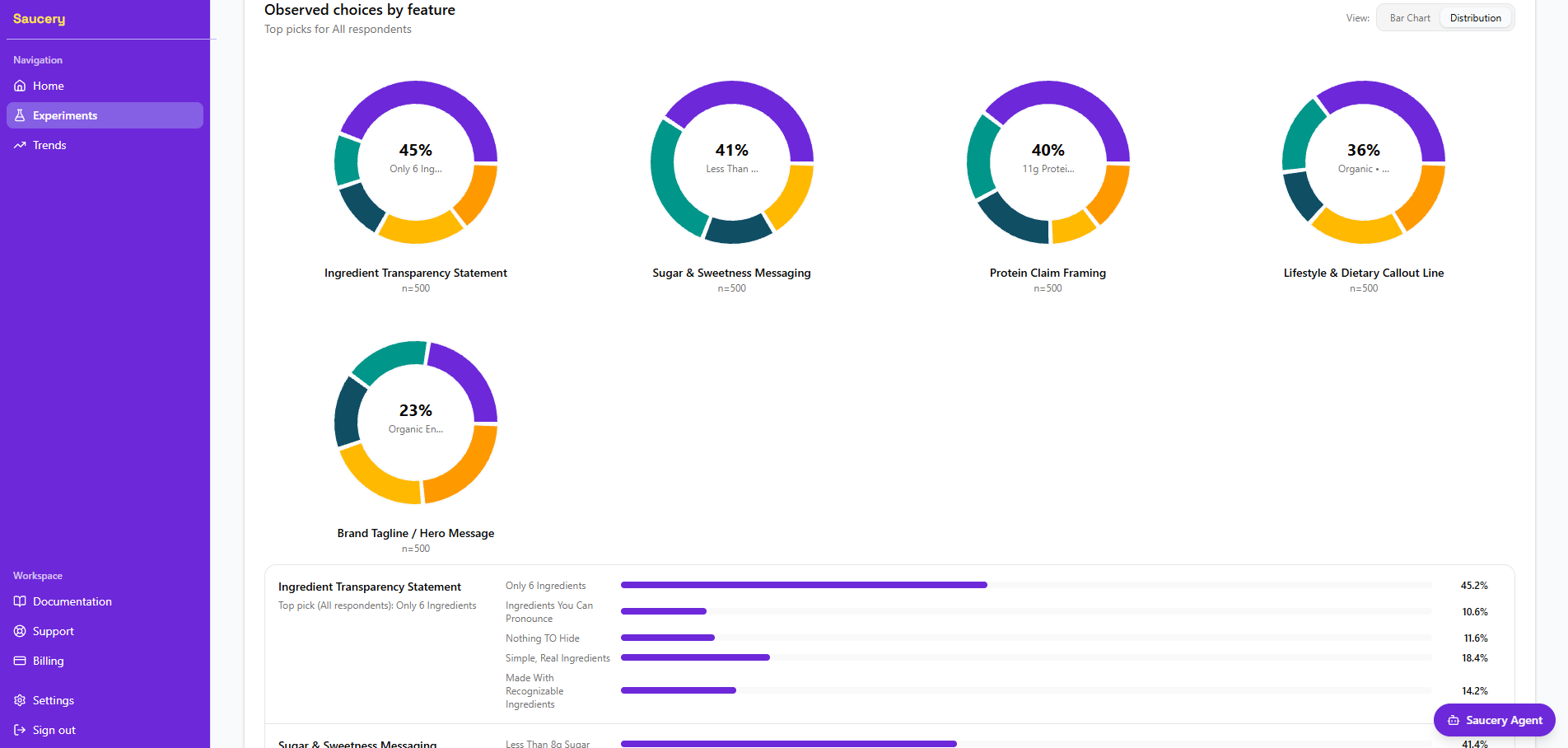

Key results:

| Question | Winning Claim | Winner Share | Lowest Claim | Lowest Share |
|---|---|---|---|---|
| Ingredient transparency | “Only 6 Ingredients” | 45.2% | “Clean Label Certified” | 15.6% |
| Protein framing | “11g Protein Per Bar” | 40.4% | “Plant-Based Protein Power” | 17.6% |
| Sugar messaging | “Less Than 8g Sugar” | 41.4% | “Smart Sweetness, No Compromise” | 14.8% |
| Dietary callouts | “Organic • Vegan • GF” | 36.0% | “Made With Superfoods” | 16.2% |
| Brand tagline | “Organic Energy, Simplified” | 23.4% | “Power Your Purpose” | 21.2% |

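Four of the five questions produced a clear winner, with the top option scoring more than double the lowest. The brand tagline question was the exception: a gap of 23.4% vs 21.2% is likely within sampling noise at n=500, which fits the tagline’s low weight in the purchase decision. The experiment took under two hours and cost a fraction of a traditional consumer panel, yet the methodology (discrete choice, randomised combinations, census-representative sample) is the same used by the major research firms.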
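One way to sanity-check the gaps in the table is a z-score for the difference between two shares drawn from the same sample. The sketch below assumes each of the 500 respondents made a single pick per question (a simple multinomial model), which may not match the actual experimental design. Treat it as a back-of-envelope check rather than the analysis behind the results. The `gap_z` helper is hypothetical.

```python
import math

N = 500  # respondents, as reported

# (winner share, lowest share) per question, from the results table
results = {
    "Ingredient transparency": (0.452, 0.156),
    "Protein framing": (0.404, 0.176),
    "Sugar messaging": (0.414, 0.148),
    "Dietary callouts": (0.360, 0.162),
    "Brand tagline": (0.234, 0.212),
}

def gap_z(p1, p2, n):
    """z-score for the gap between two shares from one multinomial sample."""
    # Var(p1 - p2) = (p1 + p2 - (p1 - p2)^2) / n for shares of the same sample
    se = math.sqrt((p1 + p2 - (p1 - p2) ** 2) / n)
    return (p1 - p2) / se

z_scores = {q: gap_z(hi, lo, N) for q, (hi, lo) in results.items()}
for question, z in z_scores.items():
    flag = "clear" if z > 1.96 else "too close to call"
    print(f"{question}: z = {z:.1f} ({flag})")
```

Under these assumptions the first four gaps sit far above the conventional 1.96 threshold, while the tagline gap falls below it and is best read as a near tie.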

How to Build Your First Concept Test

- Start with your actual product. Write the product description using your real SKU — real ingredients, real certifications. Don’t test abstract concepts; test the product you’re about to manufacture.

- Identify the one decision you need to make. Is it which claim should lead on pack? Which price point maximises revenue? Which flavour to launch next? Pick one.

- Write 3–5 realistic options for that decision. Each option should be something you could actually put on shelf. Avoid testing options you’d never use — they waste a slot and dilute the signal.

- Check coherence. Read all your questions back-to-back. Do they all make sense asked about the same product? Do any contradict the product description? If a question feels disconnected, it belongs in a separate experiment.

- Run the test with enough respondents. 500 consumers gives you stable preference shares across 3–5 options. Fewer than 300 and the differences between options may not be statistically reliable.

- Act on the results. Use the winning claims to brief your packaging designer. Use the attribute importance scores to prioritise your packaging real estate. The data replaces the debate.
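The sample-size guidance in the steps above can be checked with the standard margin-of-error formula for a proportion. This sketch assumes simple random sampling and a 95% confidence level, and uses a 25% share (four evenly matched options) as an illustrative case.

```python
import math

def margin_of_error(share, n, z=1.96):
    """Half-width of a 95% confidence interval for a preference share."""
    return z * math.sqrt(share * (1 - share) / n)

for n in (300, 500):
    moe = margin_of_error(0.25, n)
    print(f"n={n}: 25% share, plus or minus {moe:.1%}")
```

At 300 respondents the interval is roughly five points either side, so two options a few points apart cannot be separated. At 500 it tightens to under four.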

Run Your Own Concept Test

Saucery runs discrete choice concept tests with 500+ census-backed synthetic consumers — typically in under two hours. Configure your product, set your questions, and get preference shares that directly inform your packaging brief. Start a free experiment.